Author: adamgdunn

-

On the value of deplatforming, and seeing online misinformation as an opportunity to counter misinformed beliefs in front of a key audience

-

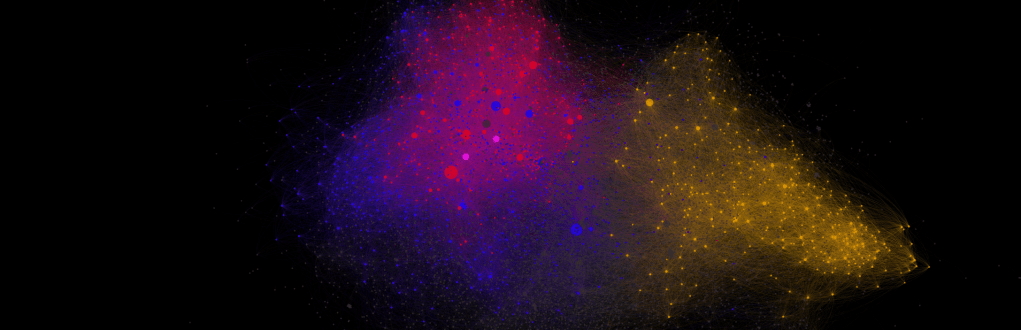

Do Twitter bots spread vaccine misinformation?

-

trial2rev: seeing the forest for the trees in the systematic review ecosystem

-

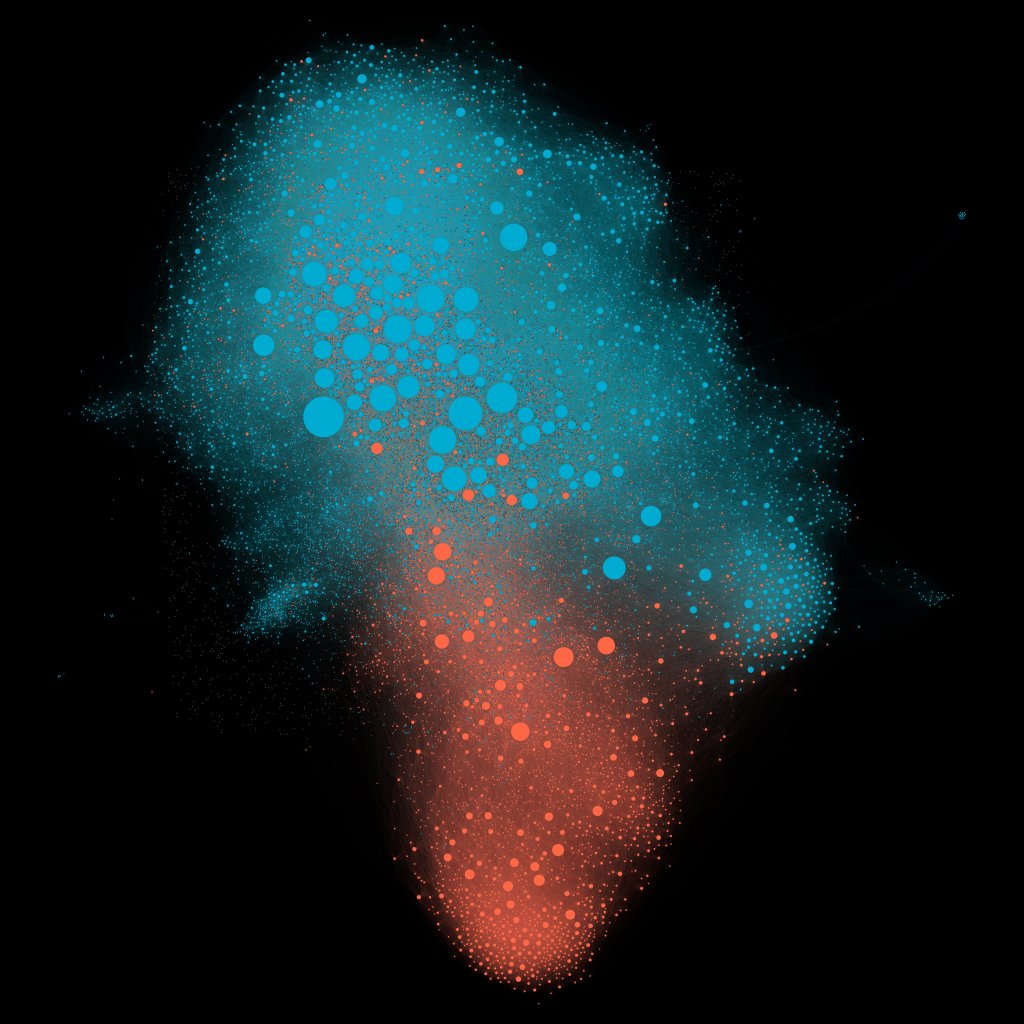

How articles from financially conflicted authors are amplified, why it matters, and how to fix it.

-

Thinking outside the cylinder: on the use of clinical trial registries in evidence synthesis communities

-

Differences in exposure to negative news media are associated with lower levels of HPV vaccine coverage